The Ultimate Guide to Closed Captioning

A Brief Introduction

Making Video Accessible

Over 5% of the world’s population has disabling hearing loss.

With the massive proliferation of online video content, it’s become more important to make online video accessible.

More video content is being uploaded to the web in 1 month than TV has created in 3 decades.

How do you make online video accessible?

You guessed it: caption it.

Closed captions are key players in video accessibility. Without captioning, the 360 million people with hearing loss around the world would be unable to comprehend and engage with your video content.

The Origin of Closed Captioning

It took 44 years from the invention of the television for closed captioning to be added to programs. The idea of making television accessible for the deaf and hard of hearing didn’t even sprout until 1970.

In 1972, at Gallaudet University, ABC and the National Bureau of Standards presented the technology necessary to make television accessible with captions.

It was revolutionary.

Later that year, captions debuted publicly for the first time on Julia Child’s show, “The French Chef.”

Like a waterfall effect, captions started to appear on television, and by the turn of the century, closed captions had become a legal mandate for television.

What Is Closed Captioning?

Closed captions are a textual representation of the audio within a media file. They make video accessible to deaf and hard-of-hearing viewers by providing a time-to-text track as a supplement to, or as a substitute for, the audio.

While the text within a closed caption file consists predominantly of speech, captions also include non-speech elements like speaker IDs and sound effects that are critical to understanding the plot of the video.

Closed captions are usually noted on a video player with a CC icon.

Types of Closed Captions

By now, you know what captions are. But how are they different from subtitles? And how are subtitles different from Subtitles for the Deaf and Hard of Hearing?

Let’s explore.

There’s a big difference between closed captions and subtitles, even though the terms are often used interchangeably.

Closed captions assume the viewer cannot hear. They are often denoted with a CC icon on video players and remotes.

Subtitles, on the other hand, are for hearing viewers who don’t understand the language of the audio. Their purpose is to translate the spoken audio into the viewer’s language.

Unlike captions, subtitles do not include the non-speech elements of the audio (like sounds or speaker identifications). Subtitles are also not considered an appropriate accommodation for deaf and hard of hearing viewers.

Captions vs. Subtitles

- Captions assume the viewer cannot hear. They are time-synchronized text of the audio content and include non-speech elements like noises.

- Subtitles assume the viewer can hear but doesn’t understand the language. Subtitles translate the audio into another language and don’t include non-speech elements.

In the United States, the distinction between closed captions and subtitles is important. However, in other countries – like in Latin America – closed captions are called subtitles.

Subtitles for the Deaf and Hard of Hearing (SDH), on the other hand, assume the viewer cannot understand the language and cannot hear.

Essentially, SDH subtitles combine the information conveyed by closed captions and subtitles – including critical non-speech elements.

![Three screens showing English CC ([whispers] thank you), subtitles (gracias), and SDH ([susurra] gracias).](https://www.3playmedia.com/wp-content/uploads/2022/06/Open-Captions-vs-closed-captions-e1753820989886.jpg)

Closed Captioning vs. Open Captions

The difference between closed captioning and open captioning comes down to user control.

Open captions are burned into the video and don’t give the user the control to toggle the captions on or off.

Alternatively, closed captions are added to a video as a “sidecar file” – they are a separate asset from the video.

When you order a file for encoding you can choose between closed captions or open captions.

Open Captions vs. Closed Captions

Open captions are burned into the video, cannot be turned off, and are used for offline or social media video. Closed Captions are published as a sidecar file, can be turned on or off by the user, and are used for online video.

Closed caption encoding allows the user to turn the captions on or off on offline videos. Open caption encoding burns the captions into the video, and whether the video is published online or offline, users can’t turn the captions off.

The easiest way to create open captions is to hire a professional captioning company that offers open caption encoding. Open caption encoding can be tricky to do yourself. It can be time-consuming and often requires expensive video software.

You may be wondering, why would someone want to use open captions?

Open captions are useful when video players don’t accept sidecar files. They are also helpful for social media. Platforms like Instagram, Twitter, and Snapchat don’t allow users to upload a separate closed caption file. As a result, companies use open captions to ensure users are always in the know and their videos are accessible.

Other social media platforms like Facebook opt to autoplay videos on silent. Instead of having users scroll past a video, using open captions will help to quickly capture a viewer’s attention. In fact, Facebook uncovered that adding captions to videos increased view time by 12%!

All About Caption Quality

Why does closed caption quality matter?

Well, if you turn on the automatic captions on a video, then compare what is being said in the video with what is in the captions, you’ll probably notice a large discrepancy.

Sometimes the captions don’t make sense at all.

For a deaf or hard-of-hearing viewer, this can be very frustrating.

Closed caption quality matters because closed captions are meant to be an equivalent alternative to a video’s audio for individuals with hearing loss. When closed captions are inaccurate, they are inaccessible.

Caption Quality

- Readable

- Do not obstruct important content in video

- Include speaker labels

- At least 99% accurate

- Follow DCMP, FCC, and/or WCAG standards

- 3Play Media best practices for caption quality

What is 99% Accuracy?

The industry standard for closed caption accuracy is a 99% accuracy rate.

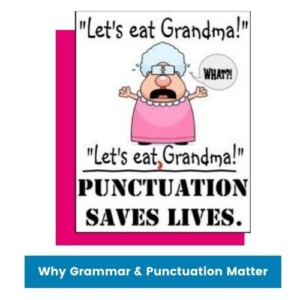

Accuracy measures punctuation, spelling, and grammar.

A 99% accuracy rate allows for a 1% error rate, which works out to a leniency of 15 errors in total per 1,500 words.

So, what happens if you have a lower accuracy rate?

Studies have shown that even a 95% accuracy rate is sometimes insufficient to accurately convey complex material. For a typical sentence length of 8 words, a 95% word accuracy rate means there will be an error, on average, every 2.5 sentences.
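The arithmetic behind these figures can be checked with a short Python sketch. The function names here are made up for illustration, and the calculation assumes errors are counted per word and spread evenly through the transcript.

```python
def allowed_errors(word_count: int, accuracy: float) -> int:
    """Number of word errors permitted at a given accuracy rate."""
    return round(word_count * (1 - accuracy))


def sentences_per_error(accuracy: float, words_per_sentence: int = 8) -> float:
    """Average number of sentences between errors at a given word accuracy."""
    words_per_error = 1 / (1 - accuracy)  # e.g. 95% accuracy: one error per 20 words
    return words_per_error / words_per_sentence


# 99% accuracy on a 1,500-word transcript
print(allowed_errors(1500, 0.99))

# 95% accuracy with 8-word sentences
print(round(sentences_per_error(0.95), 2))
```

At 95% accuracy there is one error per 20 words, and 20 words is two and a half 8-word sentences, which is where the "every 2.5 sentences" figure comes from.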

Most automatic speech recognition (ASR) technologies have an accuracy rate of 80%.

Knowing how a captioning vendor measures its accuracy rate is important. For example, some closed captioning vendors treat punctuation errors as subjective, even though an em dash, period, or comma could make all the difference to the meaning of a sentence.

Accuracy and Choosing a Captioning Vendor

Many vendors promise a 99% accuracy rate, but in reality, their accuracy falls short. Ask your captioning vendor the following questions to ensure you get the most accurate files back:

- Do you guarantee 99% accuracy on all files?

- How do you measure accuracy rates?

- Is the accuracy consistent regardless of the size of the file?

Automatic Speech Recognition (ASR)

Automatic Speech Recognition (ASR) is a technology that automatically transcribes the spoken words in a video into text, without human help.

ASR transcripts are often riddled with inconsistencies and lack important closed caption quality standards.

ASR is fast, cheap, and a good rough draft. To give your viewers an accurate transcript, it’s always best practice to review and edit ASR transcripts.

Why is ASR Not Good Enough?

- ASR only provides 80% to 95% accuracy, at best.

- ASR does not include non-speech elements, which are critical for comprehension.

- ASR does not include grammar or punctuation.

- Cheap services often mean low-quality transcription, which is common with many ASR services.

- Poor audio quality means poor transcription quality with ASR.

- Low accuracy rates with ASR affect reading comprehension for viewers.

The Cost of Inaccurate Captions

Other than the inaccessibility of inaccurate captions, there are many reasons why closed caption quality matters.

Inaccurate captions can have detrimental effects on your viewers and on your brand.

1. Inaccurate captions mean more work for you.

Instead of focusing on other projects, you now have to spend more time trying to fix your captions.

2. Inaccurate captions also lead to miscomprehension of content.

Errors affect the reading comprehension of your content. Misspellings and incorrect words can be misleading to readers.

Closed caption quality is especially important in educational videos. Mistakes in the caption file can easily misinform a student.

3. Inaccurate captions also hurt your brand.

In a study by Global Lingo, 59% of respondents said they “would not use a company that had obvious grammatical or spelling mistakes on its website or marketing material.”

Grammar mistakes can hurt your credibility and cost you millions in sales.

Nowadays, your online appearance matters, and words are a major part of it. As a result, it’s imperative that you make a good impression by using accurate captions.

Closed Captioning Standards

The FCC, DCMP, and WCAG have outlined captioning standards to ensure that all closed captions are accessible for deaf and hard-of-hearing individuals.

Closed Captioning Standards

- Described and Captioned Media Program (DCMP)

- FCC Caption Quality Standards

- Web Content Accessibility Guidelines (WCAG) 2.0

The DCMP

The Described and Captioned Media Program (DCMP) is a set of guidelines for captioning best practices. The DCMP’s philosophy for captioning states, “all captioning should include as much of the original language as possible; words or phrases which may be unfamiliar to the audience should not be replaced with simple synonyms. However, editing the original transcription may be necessary to provide time for the caption to be completely read and for it to be in synchronization with the audio.”

According to the DCMP, caption quality is:

- Accurate: Errorless captions are the goal for each production.

- Consistent: Uniformity in style and presentation of all captioning features is crucial for viewer understanding.

- Clear: A complete textual representation of the audio, including speaker identification and non-speech information, provides clarity.

- Readable: Captions are displayed with enough time to be read completely, are in synchronization with the audio, and are not obscured by (nor do they obscure) the visual content.

- Equal: Equal access requires that the meaning and intention of the material are completely preserved.

Note: The DCMP guidelines are consistent with the FCC captioning guidelines.

FCC Caption Quality Standards

The FCC caption quality standards are based on accuracy, timing, completeness, and placement.

These closed captioning standards apply to pre-recorded, live, and near-live video programming.

With accuracy, the FCC states that closed captions must match the spoken words in the audio to the fullest extent. This includes preserving any slang or accents in the content and adding non-speech elements. For live captioning, some leniency does apply.

Closed captions must also be synchronized; they must align with the audio track and each caption frame should be presented at a readable speed – 3 to 7 seconds on the screen.

Completeness is also important. Closed captions must run from the beginning to the end of the program and not drop off.

Closed captions must also be placed so that they do not block other important visual content.

WCAG 2.0

The Web Content Accessibility Guidelines (WCAG) are a set of best practices for web accessibility. They cover everything from keyboard functionality to video accessibility.

WCAG has been published in several versions, including WCAG 1.0, 2.0, 2.1, and 2.2. The most widely used guideline is WCAG 2.0.

WCAG 2.0 has three levels of compliance: Level A, AA, and AAA. Level A is the easiest to complete, while level AAA is the hardest. Most web accessibility laws require compliance with Level A and/or AA.

WCAG 2.0 Level A and Level AA require captions for pre-recorded and live video, respectively.

WCAG 2.0 1.2.2 Captions (Pre-recorded) states that synchronized captions should be provided for all video with audio. When there is no audio, content creators must provide a note saying “No Sound is used in this clip.”

WCAG 2.0 1.2.4 Captions (Live) is a Level AA requirement. All live synchronized media must have captions and a mechanism for people to access those captions.

The 3Play Standard for Caption Quality

Legal standards for captioning can be vague, so we’ve outlined some best standards for caption quality focused on readability, closed caption placement, and speaker labels.

Readability

High-quality captions must be readable; otherwise, they aren’t accessible. The goal of captions should be to match the intent and tone of the audio so that deaf and hard-of-hearing individuals can comprehend the content.

The first element of readability is accuracy. The industry standard for caption accuracy is 99%. Accuracy measures grammar, punctuation, and spelling.

As we covered earlier, inaccurate captions are detrimental to the reading comprehension of your content. Errors as small as “its” versus “it’s,” or as substantial as a misused word, can change the meaning of your content.

Captions will sometimes have errors; that’s why the standard for accuracy isn’t 100%. But having too many errors is inaccessible and unfair to viewers.

The next element of readability is grammar and punctuation. Grammar and punctuation make your content readable. They should also be consistent throughout a closed caption file.

Then there are caption frames and characters per line. Each caption frame should hold 1 to 3 lines (most are two lines) of text on the screen at a time.

Captions should be time-synchronized to the audio and last 3 to 7 seconds on the screen.
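These readability guidelines are easy to check automatically. The sketch below is only an illustration: the `CaptionFrame` class and `check_frame` function are hypothetical names, and the thresholds are the 1 to 3 lines and 3 to 7 seconds described above.

```python
from dataclasses import dataclass


@dataclass
class CaptionFrame:
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video
    text: str     # caption text, one on-screen line per "\n"


def check_frame(frame, min_seconds=3.0, max_seconds=7.0, max_lines=3):
    """Return a list of readability problems found in one caption frame."""
    problems = []
    duration = frame.end - frame.start
    if duration < min_seconds:
        problems.append(f"on screen for {duration:.1f}s, under {min_seconds:.0f}s")
    elif duration > max_seconds:
        problems.append(f"on screen for {duration:.1f}s, over {max_seconds:.0f}s")
    line_count = len(frame.text.splitlines())
    if not 1 <= line_count <= max_lines:
        problems.append(f"{line_count} lines, expected 1 to {max_lines}")
    return problems


frames = [
    CaptionFrame(0.0, 4.0, "PROFESSOR: Welcome back.\nLet's pick up where we left off."),
    CaptionFrame(4.0, 5.0, "[LAUGHTER]"),
]
for number, frame in enumerate(frames, start=1):
    print(number, check_frame(frame) or "ok")
```

A check like this only flags frames for a human to review. A short sound effect, for example, may legitimately be on screen for less time than a full sentence.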

Caption Placement

Typically, closed captions are placed in the lower center of the screen but should be moved when important visual elements – like a speaker’s name – appear in the video.

Closed captions should also go away when there is a pause or silence in the audio.

Speaker Labels

Speaker labels are tremendously helpful for clarifying who said what, especially when there are multiple speakers on the screen.

If names are not known, using a generic label like “PROFESSOR,” “STUDENT,” or “SPEAKER” is acceptable, but, ideally, you would include the name of the speaker.

How to Add Captions

Not all video players are the same, which means that adding closed captions to videos can differ significantly from video player to video player.

In this section, we’ll explore how to add closed captions to your videos – from creating captions to uploading them.

How to Add Captions to Video

- Upload as a “sidecar” file

- Use open captions

- Encode your captions

- Use an integration or API workflow

There are four ways to add captions to videos: using a sidecar file, open captions, encoding your captions, and using an integration or API workflow.

The most common way to add captions to your online videos is to use a “sidecar” file. A sidecar file is a computer file with stored data, in this case captioning data.

Open captions are captions that are burned into a video file. Users can’t turn these captions off or on. Open captions are good for social video.

Caption encoding is when captions are embedded into the video and presented as a single asset. Caption encoding is intended for video that will be shown offline or on a platform that doesn’t support captioning.

Lastly, an integration or an API workflow is a way to automate the process of adding closed captions. Essentially, you are creating a link between your captioning vendor and video player to allow your captioning vendor to automatically post your captions back to the original video file.

Caption Formats

As we’ve mentioned, not all video players accept the same caption formats.

Some closed caption formats like an SRT or WebVTT are easier to create than others. We’ll cover a bit more about closed caption formats later, but for caption formats that require hex codes or are harder to create, we recommend using a professional captioning service or a caption format converter.

Open Captions

Open captions are burned into a video. These are helpful for social video, kiosks, or offline video.

The easiest way to create open captions is to hire a professional captioning service. Although more time-consuming, you can also create open captions using Adobe Premiere.

Creating Open Captions with Adobe Premiere Pro

- Create your caption file and save it as an SCC. Make sure to always proofread your transcript before encoding your captions!

- Import the caption file into your project in Adobe Premiere Pro. To do so, select File > Import. Select your .scc caption file. The caption file will appear in your project file as a video file with no audio. Drop the file into your video sequence.

- Enable closed captions in Adobe Premiere Pro. Click on the far upper right-hand corner of the program monitor where your video displays. Scroll down and click Closed Captioning Display > Enabled.

- Customize caption display (optional). Head over to the Captions Panel. Navigate to Window > Captions, and then select your caption file in the video sequence. Customize font color, background color, line breaks, timing, and caption placement.

- Burn the captions into the video. Open caption text is automatically burned into the video when it is placed in the sequence and exported.

Caption Encoding

Caption encoding is when captions are embedded into a video and presented as a single asset.

Typically, you can order open caption encoding or closed caption encoding. Open caption encoding means the captions are burned into the video and can’t be turned off or on. Closed caption encoding means the video will be viewed offline, but users can still toggle the captions on or off using the “CC” button.

Integrations and API Workflows

Integrations and APIs link disparate systems or platforms to make it easy to share information and build workflows between the two.

With captioning, they help eliminate mundane steps when using a closed captioning vendor.

Essentially, you start by linking your captioning account to your video platform account. Once they are linked, all you have to do is order your captions via your closed caption provider’s account system or via your video platform. Your closed captioning provider will then automatically post your captions back to your video when they are complete.
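The link, order, and post-back steps can be pictured with a small in-memory simulation. Everything in this sketch is hypothetical: `VideoPlatform`, `CaptionVendor`, and their methods are stand-ins invented for illustration, not any real vendor’s or platform’s API, and a real integration would talk to those systems over the network.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class VideoPlatform:
    """Hypothetical stand-in for a video platform account."""
    captions: Dict[str, str] = field(default_factory=dict)

    def attach_captions(self, video_id: str, caption_file: str) -> None:
        self.captions[video_id] = caption_file


@dataclass
class CaptionVendor:
    """Hypothetical stand-in for a captioning vendor account."""
    platform: Optional[VideoPlatform] = None
    pending: List[str] = field(default_factory=list)

    def link(self, platform: VideoPlatform) -> None:
        # Step 1: link the captioning account to the video platform account.
        self.platform = platform

    def order(self, video_id: str) -> None:
        # Step 2: order captions for a video on the linked platform.
        if self.platform is None:
            raise RuntimeError("link a video platform before ordering")
        self.pending.append(video_id)

    def complete_orders(self, make_captions: Callable[[str], str]) -> None:
        # Step 3: when captions are complete, post them back automatically.
        for video_id in self.pending:
            self.platform.attach_captions(video_id, make_captions(video_id))
        self.pending.clear()


platform = VideoPlatform()
vendor = CaptionVendor()
vendor.link(platform)
vendor.order("intro-video")
vendor.complete_orders(lambda video_id: f"{video_id}.srt")
print(platform.captions)
```

The point of the sketch is the shape of the workflow: once the two accounts are linked, the person ordering captions never has to download or upload a caption file by hand.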

Integrations and Choosing a Captioning Vendor

Integrations allow you to automate your captioning workflow. Choosing a vendor that offers integrations will save you a ton of time and make your captioning workflow easier. Here are questions to ask a vendor:

- Do you offer integrations?

- How do your integrations work?

- What platforms do you integrate with?

- How does a customer set up an integration?

DIY Workflows for Captioning

Budgets don’t always allow for one to rely on a professional captioning service. So, what’s a good workaround?

Well, you can create your own captions!

Yes, DIY captioning can be a time-consuming and labor-intensive process, but there are some tools that can help ease the burden.

Use the following tools to help you with DIY captioning.

DIY Captioning

- Create captions with YouTube

- Create captions from an existing transcript

- Transcribe your audio into text manually

- Use automatic speech recognition software

Creating Captions with YouTube

Did you know more than 500 million hours of videos are watched on YouTube each day? YouTube is pretty much the king of video content on the internet. In fact, every 60 seconds, 72 hours of video are uploaded to the platform.

But how much of this content is captioned?

YouTube provides many free tools to help users close caption their videos. In fact, you can use these tools to help closed caption your own videos, whether or not you are posting them to YouTube.

YouTube offers free automatic closed captioning for videos uploaded to the platform. Since automatic captions are often riddled with inconsistencies, YouTube also provides a free editing tool.

And if you already have a transcript, YouTube will time code your closed captions to your video for free.

You can also easily download completed closed caption files in various formats, which you can then use to upload captions to other video players.

YouTube Closed Captioning

- From the Video Manager, select your video and click Edit > Subtitles and CC.

- Click English (Automatic) to pull up the automatic captions.

- To Edit these captions, click Edit. You can now select individual caption frames to edit. Your changes will populate in the video preview.

- When you are ready to download the closed caption file, select Actions > Download. YouTube allows you to download your closed caption file as a .sbv, .srt, or .vtt.

Always be careful with YouTube closed captioning and be sure to edit the final closed caption file before publishing. If you upload poor-quality captions, Google will flag your content as spam and penalize you in search results.

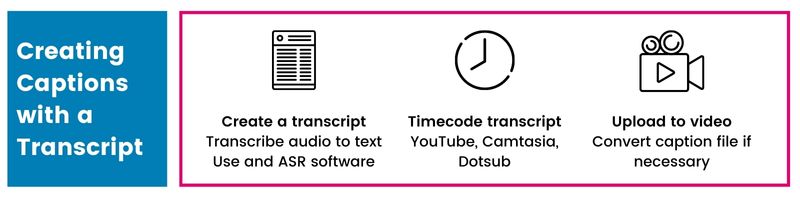

Creating Captions with a Transcript

Creating a closed caption file is way easier when you already have a transcript. If you don’t have a transcript, using automatic speech recognition software like Dragon, Dictation, or Camtasia can help you create a rough draft. Simply make sure to go back and edit it for accuracy.

With your transcript in hand, you’ll need to synchronize your transcript and video. We don’t recommend you create the timecodes yourself. Instead, use a program like Camtasia, Accessify Subtitle Horse, or YouTube to create the time codes for you.

Finally, you’ll need to make sure your transcript is in the correct caption format for the video player you want to use. Reference the caption and subtitle format list to check what your video player requires.

Once you have the correct caption format, you can upload it to your video. Hooray!

Creating Closed Captions Manually or with ASR

Creating closed captions from scratch can be a very time-consuming process, plus there is a lot of room for human error.

If you are transcribing a video yourself, the easiest way to do it is to use technology.

Using automatic speech recognition software like YouTube, Dragon, or Camtasia will provide you with a good rough draft that you can edit.

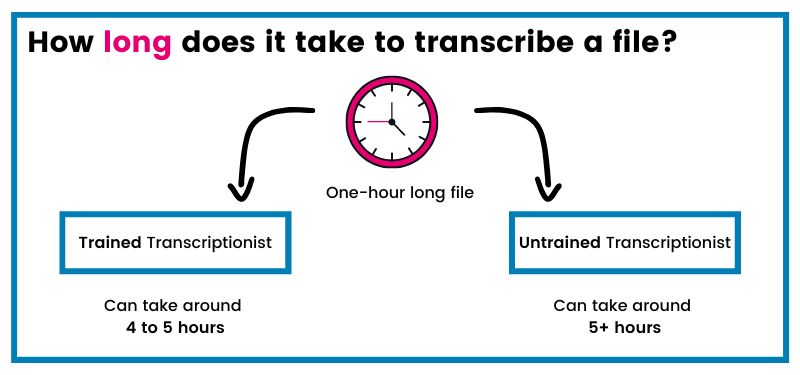

Adding technology into the mixture can cut your time by more than half. On average a trained transcriptionist can take four to five hours to transcribe one hour of audio or video content from scratch. For an untrained novice, this can take much longer.

Using software to timecode your closed captions will also save you a ton of time. Timecoding your closed captions yourself is never recommended as there is a lot of room for error.

Adding Captions to Social Video

Over the last couple of years, social video has grown to be the most popular form of content on social media.

Social video is a powerful tool for marketers, influencers, and organizations to engage with their audience and share interesting stories.

The major social video platforms are YouTube, Facebook, and Instagram. Twitter and Snapchat are also popular mediums to publish video.

While most social platforms don’t allow users to upload caption files, captioning social video is still possible and very beneficial.

Facebook uncovered that 80% of viewers reacted negatively to videos autoplaying with sound. As a result, they now autoplay videos on silent. Other social platforms, like Instagram, have followed in Facebook’s footsteps.

41% of videos are incomprehensible without sound or closed captions. This means that if you are not closed captioning your videos, viewers are most likely scrolling past your videos without playing them.

Platforms like YouTube and Facebook have mechanisms for users to add closed captions to videos.

On the other hand, Instagram, Snapchat, and Twitter require open captions.

Open captions are burned into a video file. They can’t be turned off or on by the viewer. The easiest way to create open captions is to go through a professional captioning vendor and order caption encoding.

How to Add Captions to Facebook

- Transcribe your video and save it as an SRT file.

- Upload captions to your Facebook under Edit Video.

How to Add Captions to Instagram, Twitter, and LinkedIn

- Get your captions encoded (burned) into your video.

- Publish your encoded video and watch the likes roll in!

Closed Caption Formats

There are a lot of caption formats out there. We know, it can get confusing.

Closed caption formats vary by video player. Some are more legible than others.

Let’s break them all down.

Closed Caption Formats

- SubRip Subtitle (SRT)

- Web Video Text Tracks (WebVTT)

- Scenarist Closed Captions (SCC)

- Spruce Subtitle File (STL)

- Distribution Format Exchange Profile (DFXP)

- Timed Text Markup Language (TTML)

- Society of Motion Picture and Television Engineers Timed Text (SMPTE-TT)

- CAP

- Captionate XML (CPT.XML)

- PowerPoint XML (PPT.XML)

- European Broadcasting Union subtitles (EBU.STL)

- RealText (RT)

- Synchronized Accessible Media Interchange (SAMI or SMI)

- SubViewer (SBV or SUB)

- Adobe (ADBE)

- Apple XML Interchange Format (Apple XML)

- Avid (AFF or Avid DS)

- MacCaption (CCA, MCC, or MCC V2)

- ONL – CPC 715

- Crackle Timed Text (Crackle TT, a variant of SMPTE-TT)

- DECE CFF (Variant of SMPTE-TT with auxiliary PNG files)

- Evertz ProCAP

- iTunes Timed Text (ITT)

- Matrox for MX02 (Matrox4VANC)

- Multiple CC (Multiplexed SCC)

- XML file (Rhozet)

- Sony Pictures Timed Text XML (SonyPictures TT)

- Texas Instruments DLP Cinema XML (TIDLP Cinema)

- Windows Media timed text file (WMP.TXT)

- LRC (.lrc) – No styling, but enhanced format supported.

- Videotron Lambda (.cap) – Primarily used for Japanese subtitles.

With dozens of closed caption formats to choose from, it helps to know that every format has a purpose, and chances are you’ll only have to deal with a couple.

To make this more digestible, let’s break down every closed caption format by simple, intermediate, and advanced.

Simple Closed Caption Formats

Simple closed caption formats are the SRT and WebVTT files of the closed captioning world. These formats are used for online video.

Most popular video players and lecture capture systems use simple closed caption formats.

SRT and WebVTT files are fairly simple to create by hand. They both read like a script, i.e., you can read each closed caption frame, which is separated by timecodes.

Here are the simple closed caption formats you should know, their compatibility, and their use cases.

| File Format | Use Case | Compatibility |
|---|---|---|
| “SubRip Subtitle” file, SRT, or .srt | Easy to create on your own; accepted by most media players, lecture capture software, and video recording software | Facebook, YouTube, Windows Media Player, Wistia, Slideshare, Adobe Presenter, Camtasia, Kaltura, MediaSpace, Flowplayer, thePlatform, MediaCore, Mediasite, Blip.tv, Desire2Learn, Vidyard, VLC, & more… |
| “Web Video Text Tracks,” WebVTT, .vtt | Used for cloud-based videos, HTML5 media players, and video management systems; accommodates audio description, text formatting, positioning, and rendering options | Vimeo, Brightcove, JW Player, MediaCore, MediaPlatform, Video.js, YouTube, & more… |
| “Distribution Format Exchange Profile,” DFXP, .dfxp | Timed-text format; limited use among online video providers because it is not CVAA compliant | Adobe Flash, Flowplayer, Kaltura, MediaSpace, Limelight, Ooyala, Panopto, YouTube, & more… |
| “Subviewer,” SBV, SUB | Simple YouTube file format that doesn’t recognize style markups; similar to SRT | YouTube |
| “RealText,” RT, .rt | Timed-text file for RealMedia; consumes minimal bandwidth and streams quickly | RealPlayer, RealOne Player |

Intermediate Caption Formats

Intermediate caption formats are reserved for broadcast and streaming media. These file formats are on another level than SRT and WebVTT files.

An example of an intermediate caption format is the SCC, or “Scenarist Closed Captions.” The SCC is used for broadcast and web video, as well as DVDs and VHS videos.

Trying to read an SCC file is like trying to make sense of the numbers in Lost – the numbers make no sense, but they do have some meaning.

Here is a list of the intermediate closed caption formats you should know, their compatibility, and their use cases.

| File Format | Use Case | Compatibility |
|---|---|---|
| “Scenarist Closed Captions,” SCC, .scc | Broadcast video and web video; DVDs and VHS videos; based on CC data for CEA-608 (Line 21 or EIA-608 broadcast data), the standard transmission format for CC in North America | Adobe Encore, Adobe Premiere Pro, Apple Compressor, DVD Studio Pro, Final Cut Pro, iTunes, YouTube, & more… |
| “Society of Motion Picture and Television Engineers Timed Text,” SMPTE-TT, .xml | Compliant with FCC closed caption regulations for broadcasters; references video frames instead of video time; allows captions to include attributes like foreign alphabet characters and mathematical symbols; supports positioning capabilities | YouTube, Netflix, Amazon Video, Crackle, Microsoft Media Platform’s Player Framework, Yahoo, AOL, Brightcove, Open DCP, Adobe Premiere, Open Source Media Framework, Apple HTTP Live Streaming, Flowplayer, SubtitlePlus, Subtitle Edit |
| “European Broadcasting Union Subtitles,” EBU.STL | Common subtitle/caption file format for PAL broadcast media in Europe | Used for professional video editing systems like Avid |

Advanced Caption Formats

Advanced closed caption formats are used for more advanced closed captioning needs – like closed caption placement or stylistic preferences.

These are, unsurprisingly, a lot harder to create.

Here is a list of the advanced closed caption formats you should know, their compatibility, and their use cases.

| File Format | Use Case | Compatibility |
|---|---|---|
| “Captionate XML,” CPT.XML | Caption encoding on Flash Video | Captionate, Adobe Flash |
| CAP, .asc, .cap | Broadcast media; can accommodate characters in many languages for international use | Professional video editing systems |

Deep Dive: SRT and WebVTT Closed Caption File Formats

SRT and WebVTT closed caption files are the most widely used web video formats. Both are relatively easy to create by hand, but they differ from each other in important ways.

SRT Files

SRT (.srt) files are the most common type of closed caption file format. SRT stands for “SubRip Subtitle” file.

SRT files read like a script.

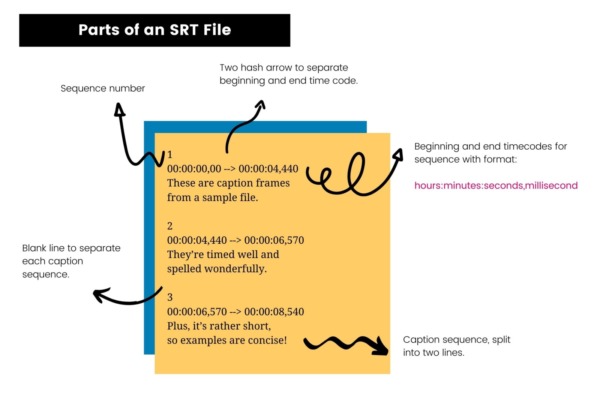

An SRT file includes:

- The number of the closed caption frame in sequence

- Beginning and end timecodes for when the closed caption frame should appear

- The closed caption itself

- A blank line to indicate the start of a new closed caption sequence

If you want to create your own captions, an SRT file is the way to go. You can easily create one using TextEdit (Mac) or Notepad (Windows).

Follow the graphic of the parts of an SRT file to learn how to format the file. Keep in mind that the timecodes are very specific – down to the millisecond. Coding these by hand is very time-consuming, so we recommend you write the script first, then timecode it using software.
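To make the SRT layout concrete, here is a minimal Python sketch that assembles the four parts listed above (sequence number, timecodes, caption text, blank line) from a list of cues. The function names `srt_timestamp` and `build_srt` and the sample cues are illustrative assumptions, not part of any captioning tool.

```python
def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timecode: HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def build_srt(cues):
    """Build SRT text from (start_seconds, end_seconds, text) tuples."""
    blocks = []
    for number, (start, end, text) in enumerate(cues, start=1):
        blocks.append(
            f"{number}\n"                                           # sequence number
            f"{srt_timestamp(start)} --> {srt_timestamp(end)}\n"  # timecodes
            f"{text}\n"                                             # the caption itself
        )
    # A blank line separates one caption frame from the next.
    return "\n".join(blocks)


if __name__ == "__main__":
    # Hypothetical cues, for illustration only.
    sample = [(0.0, 2.5, "Hello, and welcome."), (2.5, 5.0, "[MUSIC PLAYING]")]
    print(build_srt(sample))
```

Saving the printed text with a `.srt` extension produces a file in the format described above. Real timings would still come from timecoding software, as recommended.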

WebVTT Files

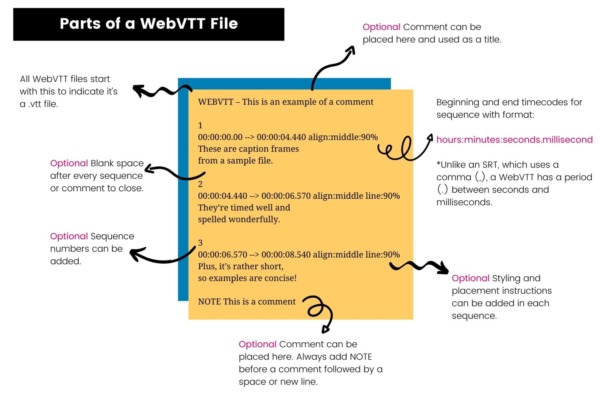

A “Web Video Text Track” file, also known as a WebVTT (.vtt), is another popular file format people use. WebVTT files are used for HTML5 video captioning.

Unlike SRT files, WebVTT files allow for description and metadata information.

WebVTT files are used because they give video creators greater flexibility. You can easily add information such as frame placement, styling, and comments.

A WebVTT file has two requirements and many optional components.

The two requirements are:

- WEBVTT at the beginning of the transcript

- A blank line in between each closed caption frame. In WebVTT, a blank line indicates the end of a sequence.

The optional components are:

- A byte order mark (BOM): tells the reader this file is encoded with UTF-8. The UTF-8 BOM is the byte sequence EF BB BF.

- A header to the right of WEBVTT. There must be a single space in between, and the header must not include a newline or “-->”. You can use this to describe the file.

- Comments: indicated by NOTE and on separate lines.

- A sequence number: these are optional but can help keep your captions organized.

- Positioning information: included on the same line after the second timecode.

You can easily create WebVTT files using TextEdit (Mac) or Notepad (Windows).
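As a rough illustration of how the two formats relate, the following Python sketch converts SRT text to WebVTT. It adds the required `WEBVTT` first line (with an optional header), keeps the blank lines between caption frames, and switches the millisecond separator from a comma to a period, which is how WebVTT writes timecodes. The function name `srt_to_vtt` is an assumption made for this example, and the sketch does not handle styling or positioning.

```python
import re

# Matches an SRT timing line such as "00:00:01,000 --> 00:00:04,000".
TIMING = re.compile(r"^(\d{2}:\d{2}:\d{2}),(\d{3}) --> (\d{2}:\d{2}:\d{2}),(\d{3})")


def srt_to_vtt(srt_text: str, header: str = "") -> str:
    """Convert SRT caption text to WebVTT text."""
    # The optional header must not contain a newline or "-->".
    if "\n" in header or "-->" in header:
        raise ValueError("header must not contain a newline or '-->'")
    first_line = "WEBVTT" + (f" {header}" if header else "")
    out = [first_line, ""]  # blank line after the WEBVTT line
    for line in srt_text.strip().splitlines():
        # Only timing lines match; sequence numbers and caption text pass through.
        out.append(TIMING.sub(r"\1.\2 --> \3.\4", line))
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    # Hypothetical SRT input, for illustration only.
    srt = (
        "1\n00:00:00,000 --> 00:00:02,500\nHello, and welcome.\n\n"
        "2\n00:00:02,500 --> 00:00:05,000\n[MUSIC PLAYING]\n"
    )
    print(srt_to_vtt(srt, header="Demo captions"))
```

The SRT sequence numbers are kept because WebVTT treats them as optional cue identifiers.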

Cost of Closed Captioning

The cost of closed captioning can vary depending on vendor and process.

Whether you are closed captioning in-house or using a professional closed captioning vendor, there are costs to closed captioning.

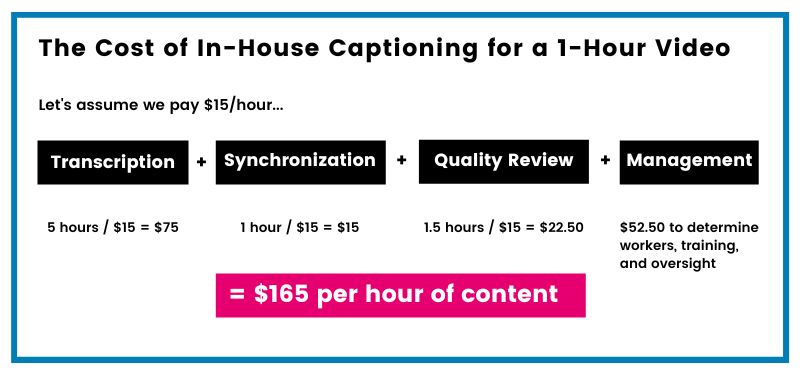

There are four important steps in a closed captioning workflow: transcribing the video, synchronizing the text, controlling quality, and managing the overall process. All these steps impact the final cost of your closed captions.

Cost of Closed Captioning

The cost of closed captioning depends on the process. You can either closed caption in-house or outsource your captions.

A common misconception is that using a closed captioning vendor will cost you more, which is why many organizations caption in-house. But using untrained transcribers – like students – can actually be more costly, as they can take longer to caption a single file.

With outsourcing, the cost of captions can vary from $1-$27 per minute. For a cost-effective and high-quality solution, opt for a vendor that uses a mix of technology and human editing.

Lastly, be careful with lower quality solutions or relying on ASR-only. The error rates in the files you get back mean that you will have to spend more time and resources correcting the files – costing you more.

In-House Closed Captioning

Many people opt to caption in-house to save money. The assumption is that professional closed captioning services are expensive.

But is it really cheaper to close caption in-house than use a professional closed captioning vendor?

Let’s calculate.

In-house Transcription Costs

The first step in closed captioning is to transcribe the video. This is often the most time-consuming part. A trained transcriptionist will take four to five hours to transcribe one hour of normal audio or video content.

While larger corporations may have the means to hire trained transcriptionists, smaller organizations with smaller budgets typically have students or interns transcribing.

As an untrained transcriptionist, a student or intern can take five hours or more to transcribe a one hour file. If this student is paid $15 per hour, this means it will cost $75 to transcribe a one-hour-long file.

In-house Timecoding Costs

Next, you have to synchronize the closed caption file. This is often the hardest part because you have to make sure that each caption frame aligns with the audio.

There are free tools that will help time code transcripts like YouTube, but for this analysis, we will assume that synchronization adds an additional hour to the process.

Now the cost rises to $90 per hour of content.

In-house Quality Control Costs

Next, we have quality control. The goal is to have accurate closed captions that are accessible and comprehensible.

Good quality closed captions use proper grammar, punctuation, and spelling. They are also synchronized correctly, readable, and don’t obstruct important content on the screen.

Quality control involves two steps: training employees on correct closed captioning standards and reviewing closed caption files for errors.

A good quality check should take longer than the duration of the actual file. Assuming an hour and a half of quality checking, the total in-house cost of closed captioning rises to $112.50 per hour of content.

In-house Operations Costs

Finally, we must add the cost of managing operations. Operations managers are in charge of training, finding students, and scheduling oversight.

A supervisor is paid around $25 per hour. Assuming it takes around 2 hours for training and oversight, this brings the cost to roughly $165.

In-house Final Cost

A conservative estimate for an in-house, large-scale closed captioning operation averages $165 per hour of video, which is more than double the cost of hiring a professional closed captioning service.
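The running total in this section can be reproduced with a short Python sketch. The hours and rates are the assumptions stated in the text above ($15 per hour for a student, 5 hours of transcription, 1 hour of synchronization, 1.5 hours of quality control, and 2 supervisor hours at $25). The function name `in_house_cost` is hypothetical. With these exact inputs the sum is $162.50, in line with the roughly $165 quoted in this section.

```python
def in_house_cost(
    transcribe_hours=5.0,
    sync_hours=1.0,
    qa_hours=1.5,
    worker_rate=15.0,
    supervisor_hours=2.0,
    supervisor_rate=25.0,
):
    """Estimate the in-house cost of captioning one hour of video.

    Returns a per-step breakdown and the total, in dollars.
    """
    steps = {
        "transcription": transcribe_hours * worker_rate,
        "synchronization": sync_hours * worker_rate,
        "quality control": qa_hours * worker_rate,
        "operations": supervisor_hours * supervisor_rate,
    }
    return steps, sum(steps.values())


if __name__ == "__main__":
    steps, total = in_house_cost()
    running = 0.0
    for name, cost in steps.items():
        running += cost
        print(f"{name:<16} ${cost:>7.2f}   running total ${running:>7.2f}")
    print(f"Estimated total per hour of video: ${total:.2f}")
```

Changing any of the defaults, for example a higher hourly wage or a longer transcription time, shows how quickly the in-house figure moves.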

In-house closed captioning works when you have short videos. In fact, many universities, like the University of Arizona, implement a hybrid approach to captioning.

Outsourcing Closed Captioning

A common misconception people have about outsourcing closed captioning is that it’s expensive. When the cost to caption a 30-second Super Bowl commercial is $200 (which is really only a fraction of the $4 million spent buying the ad space), it’s easy to see where this misconception comes from.

The truth is that closed captioning prices really come down to the vendor’s process. Cheap closed captions may be enticing, but the quality could be detrimental.

ASR

Using automatic closed captions on their own is not good enough.

ASR closed captions are riddled with inconsistencies and errors; they are highly inaccessible.

ASR closed captions are easy and often free. For example, YouTube and Facebook both offer automatic captions for videos, so by default, many people opt to use them.

On average, ASR can cost $0.25 per minute. But cheap is cheap, so if you do opt to use ASR, make sure to double – maybe even triple – check your transcript before publishing.

Crowdsourcing

Crowdsourced transcription companies distribute your audio or video files among a large number of people. A single audio or video file is either segmented into smaller parts or assigned as one file.

This can be cheap and sometimes fast, but it can also be very risky.

Similarly, other people use Amazon Mechanical Turk, where you upload content to the platform, and a freelancer picks it up.

Through the Mechanical Turk, you dictate your own price, which can easily add up if you are using multiple workers.

The Risks of Crowdsourcing Captioning

- Transcriptionists lack training

- Can be expensive and not worth the cost due to inaccuracies

- Files can have inconsistencies

- Security risks

- Lack of legal compliance

- May require internal quality assurance

So, what’s the cost of crowdsourcing closed captioning?

While in many cases the price you pay is low, the consequences of using a low-quality file are pricey. For instance, you have to QA the file yourself, which takes up time away from other tasks. There are also additional costs if you resubmit a file, or order a certain closed caption format.

Using a Closed Captioning Vendor

There are many closed captioning services to choose from, but not all are created equal.

When selecting a closed captioning vendor, you want to make sure you find one that is reliable, accurate, and cost-effective.

Different vendors have different processes for closed captioning. The process will directly correlate to the price. Although it can be enticing to go for the cheaper option, the quality of the closed captions you get back might not be worth it.

So, what should you be looking for in a closed captioning vendor?

1. Accuracy rate

We talk a lot about accuracy because it’s critical to the comprehension and accessibility of your closed captions. When looking for a closed captioning vendor, you want to ensure they guarantee a 99% accuracy rate on all files, regardless of size. They should also have a clear method for measuring their accuracy. If you notice too many inconsistencies in the files you are getting back – it may be time to look for a new vendor.

2. Process

As we covered earlier, the captioning process directly impacts the file accuracy and price.

You want to look for a vendor that offers high-quality at a good price. Ask your vendor the following questions:

- How do you close caption files?

- Are single files split between multiple transcriptionists?

- Are transcriptionists trained on quality standards?

- Does the vendor have a quality review process?

3. Difficult content

Finding a vendor who can handle difficult content – while upholding a 99% accuracy rate – is especially important for colleges and universities. More advanced subjects like physics, calculus, philosophy, law, and medicine have unique terminology that is critical for student comprehension.

Make sure your vendor can handle that complex content. If you can upload glossaries with important terms, that’s a huge benefit.

4. Turnaround

Always ask your vendor about their turnaround times. In particular, ask about their standard turnaround. Knowing this ahead of time can help you plan your closed captioning projects more effectively.

5. Integrations

Choosing a vendor that integrates with your video platform will save you a lot of time.

Ask a vendor how their integrations work and if they are easy to set up.

6. Workflow

A non-user-friendly workflow can cause a lot of headaches.

A good closed captioning vendor will have a clear workflow. They will offer different methods to upload videos, they will let you know when closed captions are ready, and they will store your closed caption files for you.

7. Cost

Closed captioning costs can add up quickly. Look for a vendor who is transparent about their costs, and can help you stay within your budget.

When talking to a vendor, ask them:

- What’s your pricing model? Do you charge per minute or per file?

- Do you charge fees for having multiple speakers? Or for having speaker identifications?

- Do you charge extra fees for certain closed caption formats?

- Do you require a setup fee?

- Do you offer bulk discounts?

8. Account System

Does your closed captioning vendor allow for subaccounts? How does invoicing work? Can you pay electronically? Can you handle multiple users?

These are all important questions to ask when selecting a closed captioning vendor.

You want a vendor who can customize the account system to your needs.

9. Support

When your closed captions are due now for your Netflix show releasing in an hour, a good support system is vital.

When asking about a vendor’s support, look into how it is made available, how fast they respond, and whether they have documentation available.

How to Save Money on Closed Captioning

Closed captioning is essential to making your videos accessible, but it doesn’t have to deplete your whole budget.

There are many solutions you can implement to save on closed captioning. Simply find the solution that works best for you.

Apply a Hybrid Solution

A hybrid approach to closed captioning is when you do shorter work in-house and outsource longer files to a professional closed captioning service.

This technique has helped many organizations, like the University of Arizona, to save a ton of money on closed captioning.

So, what’s considered a short versus long video?

Typically, organizations will caption videos that are 5 minutes or less in-house. Longer videos tend to take more time and resources, so it’s more cost-effective to outsource them for closed captioning.

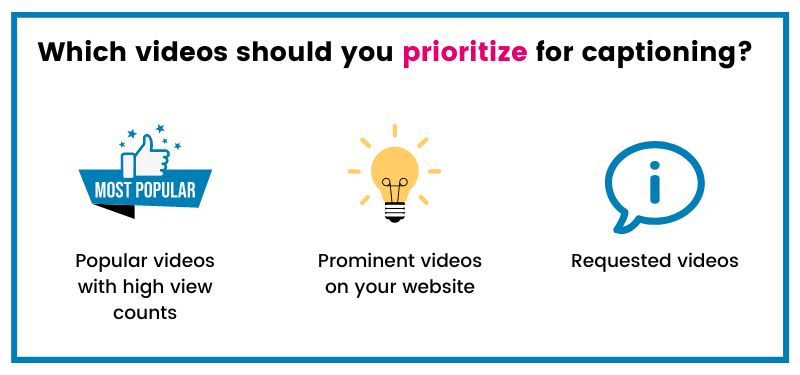

Prioritize Videos

Even though your goal should be to caption all your videos, sometimes it’s not economically feasible.

Instead of not closed captioning at all, try prioritizing your popular videos for closed captioning. Caption videos that have the most views, shares, or engagement; caption videos that are in more prominent places, like on your homepage; and caption videos requested by viewers.

If you caption on YouTube, you can use the YouTube Closed Caption Auditor to see which videos get the most traffic.

Once you have your important videos captioned, you can begin closed captioning other videos.

Note: You should also prioritize videos that people request to be captioned.

Making the Most of Turnaround Options

Quicker turnaround options can make closed captioning costs add up. Sometimes, you may need a closed caption file within 2 hours or by the next day, but if you can avoid a rushed turnaround time, you can actually save a ton of money.

Furthermore, some closed captioning vendors will offer discounts for extended turnaround times (i.e., a longer turnaround than the standard).

The best way to stay on top of your closed captioning turnaround is to integrate closed captioning into the project timeline.

Vendor Charging & Bulk Discounts

If you have a lot of video content to caption, using a vendor that offers bulk discounts can save you some money.

Another way to save money is to ensure your vendor doesn’t round up the cost of closed captioning. Some vendors will charge you for 4 minutes of closed captioning, even if your video was 3:39 minutes long. For smaller files, this might not be a big difference, but as you caption more you can save yourself more money this way.
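The effect of rounding is easy to estimate. The Python sketch below compares exact per-second billing with billing rounded up to the next whole minute for the 3:39 video mentioned above. The $1.00 per-minute rate is a hypothetical figure taken from the low end of the price range cited earlier, and `billed_cost` is an illustrative name, not any vendor's pricing API.

```python
import math


def billed_cost(duration_seconds, rate_per_minute, round_up=False):
    """Return the charge for a video, optionally rounding up to whole minutes."""
    minutes = duration_seconds / 60
    if round_up:
        minutes = math.ceil(minutes)  # 3:39 is billed as 4 full minutes
    return round(minutes * rate_per_minute, 2)


if __name__ == "__main__":
    duration = 3 * 60 + 39  # a 3:39 video, in seconds
    rate = 1.00             # hypothetical rate in dollars per minute
    exact = billed_cost(duration, rate)
    rounded = billed_cost(duration, rate, round_up=True)
    print(f"Exact billing:   ${exact:.2f}")
    print(f"Rounded billing: ${rounded:.2f}")
    print(f"Difference:      ${rounded - exact:.2f} per video")
```

The per-video difference is small, but multiplied across a large library of short videos it becomes a noticeable line item.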

Create a Closed Captioning Budget

Don’t have a closed captioning budget? It might be time to create one.

Having a closed captioning budget will help you stay on top of how much you are closed captioning, as well as ensure that you are using your funds efficiently.

There are many creative ways you can use to find the money for closed captioning.

If you are an educational institution, you can insert a closed captioning fee into the student tuition fees. This strategy has helped NC State raise over $60,000 a year for closed captioning.

If you have leftover funds from other budget initiatives, you could use those to help create a closed captioning budget as well.

The key is to assert that you are making a commitment to closed captioning.

How to Get Buy-In for Closed Captioning

The number one barrier to closed captioning is cost and budget.

As we’ve discussed above, closed captioning costs can rack up quickly, but it shouldn’t be a barrier to accessibility.

To get buy-in for closed captioning you’ll need to first build awareness, then show it’s a worthwhile investment. Follow these tips to help build awareness at your company.

1. Build Awareness

Getting buy-in for closed captioning takes work. In a study we conducted on the state of captioning, we uncovered that the true decision-makers for funding closed captioning are often unaware they are required to caption.

This means that educating your higher-ups or decision-makers is the first step to getting buy-in for closed captioning.

At Netflix, they use a bottom-up approach to get buy-in. Because accessibility is a passion of many of the employees there, they got together and actually brought it up to the higher-ups.

How to Build Awareness for Accessibility

- Host meetings or lunch & learns about accessibility best practices.

- Share end-user commentary from accessibility implementation.

- Send out a company or team accessibility newsletter.

- Attend accessibility-focused events and invite colleagues.

Education is a powerful tool. If more people at your organization realize the benefits and importance of closed captioning, more people will work to create a budget to help execute it.

2. Demonstrate the ROI

Step two is to demonstrate the ROI of closed captioning.

Closed captioning has been proven to increase engagement, boost SEO, and add brand value.

ROI: SEO

Google’s ranking bots can’t watch videos. That’s why you need captions to tell them what your content is about.

PLYMedia, an ad-tech and online marketing company, saw a 40% increase in views after adding closed captions to their videos.

Closed captions are crucial for improving your SEO and they help your videos stand out among the clutter.

ROI: Engagement

The Journal of the Academy of Marketing Science found that closed captions improve brand recall, verbal memory, and behavioral intent.

Facebook uncovered that 85% of its videos are watched without sound. As a result, they now autoplay videos without sound.

If your video lacks closed captions, more often than not, people are going to scroll past it.

ROI: Brand Value

Oath is a communications company that houses brands like Yahoo!, AOL, and HuffPost.

Oath’s brands produce a lot of video content. When it came to video accessibility, there was no question of whether to close caption or not.

Instead, they looked at their company’s values and culture. Oath values its commitment to inclusion. As a result, getting buy-in for closed captioning came from honoring that commitment, as well as realizing the ROI of closed captioning.

Oath saw closed captioning as a part of their overall goal of ensuring everyone has access to the content they produce.

3. Suggest doing a pilot project

If you are still having trouble getting buy-in, suggest doing a pilot project.

A pilot project should have a defined beginning and end, a budget, and a way to measure the impact of closed captioning.

You should monitor how people respond to the addition of closed captions, and look at the performance of the video. Did views increase? Were your videos easier to find?

Once your pilot project is over, you’ll be able to present a strong case to get buy-in for closed captioning.

Benefits of Captions

The reality is most people aren’t captioning their online video content.

While they may recognize that captions are essential for accessibility, something still keeps them from closed captioning.

More often than not that something is budgeting.

But investing in captioning can actually reap many benefits that go beyond accessibility.

In this section, we explore the benefits of closed captions for your viewers, videos, and brand.

Benefits of Captioning

- Accessibility

- Legal protection

- Video SEO

- Better focus, engagement, and memory

- Greater flexibility for viewing content

- Easy to create derivative content

- Translations

- Combat silent autoplay on social video

Accessibility

Closed captions were created as an accommodation for deaf and hard-of-hearing viewers.

With more than 48 million Americans who have some degree of hearing loss, captions are essential for allowing your message to reach the masses.

20% of Americans are deaf or hard-of-hearing. Most of these individuals (60%) are either in the workforce or in an educational setting.

71% of people with disabilities leave a website immediately if it is not accessible. With the advent of the internet, a lot of disabilities are invisible to content creators – we can’t see the people surfing or consuming our content.

Hearing loss is often an invisible disability. Proactively captioning your content will ensure that all of your viewers can enjoy your content.

Legal Protection

What do Amazon, Netflix, and FedEx have in common?

One word: captioning.

Amazon, Netflix, and FedEx have all been sued for lack of captioning.

For most video producers, you are required to caption by law. Failing to close caption your content could result in costly legal fees and damage to your reputation.

Key Captioning Lawsuits

Online Video: Netflix, Hulu, and Amazon were sued for lack of captions on their videos.

Education: MIT and Harvard were sued for providing inaccurate captions on public-facing videos.

Corporate: FedEx was sued for failing to caption online employee training courses.

Video SEO

Video SEO means optimizing your channel, playlists, metadata, description, and videos for search.

Search engine bots can’t see a video or listen to audio, but they can index text. Captions and transcripts provide a text version of your video so search engine bots can crawl and properly index your video.

As a result, you get more search engine traffic, your videos rank higher, and they rank for more diverse keywords.

According to Facebook, videos with captions have 135% greater organic search traffic.

Better Focus, Engagement, and Memory

There is an ancient Chinese proverb that goes, “I hear, and I forget; I see, and I remember.”

Captions can help you remember.

In fact, a research study by the University of Iowa found that people recalled information better after seeing and hearing it.

Captions are visual aids of the words being spoken. They can help children with reading comprehension, students with understanding the content, and ESL individuals with spelling and pronunciation.

When there is complex language, poor audio, or complicated information, captions can clarify the content for your audience.

Captions can also help grow your brand awareness.

A research study from the Journal of the Academy of Marketing Science found captions improve brand recall, verbal memory, and behavioral intent.

Greater Flexibility for Viewing Content

We live in a world where everyone seems to always be on the go – often face down, looking at their phones… watch out!

Thanks to smartphones, people have access to video content 24/7. In fact, Cisco reported that mobile video content generated the majority of traffic growth in 2017.

People are watching videos at all hours, with and without headphones.

Captions are important in order to make your video comprehensible without sound. 41% of videos are incomprehensible without sound or captions, which means that if someone doesn’t have headphones, they won’t watch your video.

Easy to Create Derivative Content

With closed captions, you also get a transcript of what is being said in the video. As a result, you can quickly scan the content of the video and create derivative content from it.

You can easily create short clips, infographics, white papers, podcasts, and more!

Translations

With captions, it’s also easier to create translations into other languages. The internet is a global place, which means that people from all over the world are accessing your content.

Translating your content is a great way to expand your reach. 80% of YouTube views come from outside the United States. In fact, 67.5% of YouTube views come from non-English speaking countries.

How to Auto Translate Captions on YouTube

1. Under Video Manager, click Edit under the video you want to translate.

2. Next, select the Subtitles/CC tab at the top of the video editor.

3. When you select Add new subtitles or CC, a search bar will appear. Search for the language you want to translate to.

4. A new menu will appear on the left. Select Create new subtitles or CC.

5. You’ll be taken to YouTube’s caption editing interface. Above the transcript, you’ll see two tabs: Actions and Autotranslate. Select Autotranslate.

6. Your translations will appear under the original script. You can easily edit them by clicking on the translated version.

7. Finally, select Publish!

Combat Silent Autoplay on Social Video

According to Facebook, 80% of viewers react negatively to videos that autoplay with sound.

This has led to a rise in social videos autoplaying on silent. You’ll notice this particularly when you are browsing Facebook, Instagram, and YouTube on your phone.

Now, if you aren’t captioning your videos, your audience will just scroll past them if they don’t have the sound on because they can’t understand it.

Captioning Laws

Nowadays, everyone is using video to tell their story. But while it’s a powerful medium to engage viewers, video falls short of being accessible for everyone.

Captions are a critical part of video accessibility, as they make video accessible to deaf and hard-of-hearing individuals.

Captioning laws can be a bit confusing. For starters, not everyone is required to caption.

On the other hand, those who are may be required to caption by multiple laws, and even though they are complying with one law, it doesn’t mean they comply with all the laws.

Confused? Fear not! Below we’ll outline all the laws you need to know and how they apply to you.

Captioning Laws

- The Rehabilitation Act – Section 504 & Section 508

- The Americans with Disabilities Act (ADA) – Title II & Title III

- The Federal Communications Commission (FCC)

- 21st Century Communications and Video Accessibility Act (CVAA)

- State Laws

The Rehabilitation Act

The Rehabilitation Act is a federal anti-discrimination law.

Under the Rehabilitation Act, Section 504 and Section 508 apply to online accessibility. Not all organizations are required to adhere to both Sections.

Section 504

This law applies to:

- Federal programs and activities

- Federal agencies

- Programs or activities receiving federal financial assistance*

- Executive agencies

- United States Postal Service

*This includes airports, state houses, public schools, colleges and universities, federally assisted housing, public libraries, police stations etc.

Section 504 states that individuals with disabilities shall not be discriminated against based solely on their disability.

Section 504 requires organizations to provide “reasonable accommodations” for individuals with disabilities to perform the essential functions of the job. This includes making video accessible through closed captioning.

Section 508

Section 508 applies to:

- The Federal Government

- State governments through “little 508s”

- States who receive federal funding through the Assistive Technology Act

The Americans with Disabilities Act

The Americans with Disabilities Act (ADA) was created to ensure equal opportunity for people with disabilities.

Title II and Title III of the ADA relate to web accessibility for state, local, public and private entities.

The ADA was intended to apply to physical structures, but through legal action, it has been extended to online content.

Note: Religious organizations are exempt from complying with the ADA.

Title II

Title II applies to “public entities” such as:

- State and local governments, including their

  - departments

  - agencies

  - other instrumentalities (for example, public meeting halls, airports, and police stations)

- Activities, services, and programs of public entities

Local governments, state governments, private colleges, and public colleges should take note of Title II of the ADA. Title II of the ADA has also been applied to private entities. Under the Title, employee training videos must also comply with the ADA.

FedEx Lawsuit and the ADA

In 2013, FedEx was sued by the U.S. Equal Employment Opportunity Commission for violating the ADA. FedEx failed to provide ASL interpreters and closed captioning for employee training videos. FedEx was ordered to pay a $3.3 million settlement and provide ASL interpretation and closed captions.

Title II of the ADA protects individuals from discrimination by public entities. It requires organizations to provide equal alternatives for communication when necessary. For example, public entities must provide auxiliary aids, unless they alter the “nature of the service, program, or activity” or cause an undue financial and administrative burden. However, if auxiliary aids are unavailable, then they must provide an alternative method for effective communication.

Title III

Title III applies to “places of public accommodation” such as:

- Public entities (ex: public venues, public transportation)

- Private entities (ex: private universities, food services, entertainment)

Title III protects individuals from discrimination by private entities.

Title III of the ADA relates to private entities, like private businesses and private colleges.

Under Title III, individuals with disabilities are entitled to the full and equal enjoyment of goods, services, facilities, or accommodations in any public place.

Netflix Lawsuit and the ADA

In 2011, the National Association of the Deaf filed a lawsuit against Netflix for failing to provide closed captioning for shows and movies on its streaming service. The court ruled that although Netflix was a website and not a physical location, it was still a “place of public accommodation” under the ADA and therefore needed to make its services accessible.

21st Century Communications and Video Accessibility Act

The CVAA applies to:

- Broadcast TV

- Faith organizations

- Streaming Media

The 21st Century Communications and Video Accessibility Act (CVAA) states that all online video previously aired on television is required to have closed captioning (including clips and montages). Captions must be “at least the same quality as when such programs are shown on television.”

If your video content has never aired on television (like a vlog on YouTube), this act does not apply to you.

Video creators and content distributors are responsible for ensuring their content is properly captioned.

Streaming sites like Netflix, Hulu, and Amazon, must caption all content that was previously aired on television. Note: Under the ADA, streaming sites must also caption original content, even if it never appeared on television.

Faith organizations that broadcast their sermons must also caption them when posted online.

The CVAA also requires live captioning for live programming.

In addition, captions must meet the FCC’s caption quality requirements, which cover caption accuracy, timing, completeness, and placement.

The Federal Communications Commission

The FCC applies to:

- Broadcast TV

- Faith Organizations

The Federal Communications Commission (FCC) requires broadcast content to be closed captioned.

The FCC has specific captioning standards that organizations must meet. These include caption accuracy, timing, completeness, and placement.

The FCC impacts both television and online video.

Any content broadcast on television must provide captioning for live, near-live, and recorded programming. As of 2011, religious organizations that broadcast on television are no longer exempt from the FCC’s requirements for captioning; now, any faith organizations publishing content on television must close caption under the FCC.

Broadcast organizations must also be aware of the audio description requirements under the CVAA.

FCC Closed Captioning Standards

- Accuracy: Captions must match spoken words to the fullest extent.

- Placement: Captions do not block important visual content.

- Completeness: Captions run from the beginning to the end of the program.

- Synchronization: Captions align with audio track and are shown at a reasonable pace.

State Laws

State laws apply to:

- Public Colleges

- Private Colleges

- State Government

- Municipalities

- K-12

Many states have enacted their own accessibility laws. Several states have even created “mini 508s,” which require institutions to comply with Section 508 of the Rehabilitation Act.

Educational institutions, state governments, and local governments should all be mindful of their state accessibility laws.

In addition, these institutions must be mindful of other accessibility laws that apply to them. Private and public colleges, state governments, municipalities, and K-12 schools must also adhere to the Rehabilitation Act and the ADA.

Conclusion

By 2019, 80% of all online traffic will be video. HubSpot also reported that 54% of consumers want to see videos from brands they support.

Video content is everything right now, which is why making it accessible should be your top priority. Adding closed captions not only provides greater access to people who are deaf and hard-of-hearing, but it also creates a better user experience for all viewers.

Always remember to edit ASR captions; the risk of having inaccessible captions is not worth it. Also, make sure to educate your organization on why video accessibility is important; decision-makers are often unaware that they are required to caption their content.

Don’t be afraid of captioning. Whether or not you are legally required to caption, closed captioning will only bring you greater returns. Eventually, captioning will become second nature and an integral step in the video production process.

Related Resources

Beginner’s Guide to Captioning

This white paper is a comprehensive beginner’s guide to all things captioning, designed to help you easily create accessible and engaging video content.

8 Benefits of Transcribing and Captioning Online Video

This short brief explores the top 8 reasons why video transcription and closed captioning are beneficial for both your organization and your viewers.

US and International Accessibility Laws

This page covers US federal accessibility laws, US state accessibility laws, and international accessibility laws. It also breaks down accessibility laws by industry.

How to Select the Right Captioning Vendor: 10 Crucial Questions to Ask

This white paper will help you compare your closed captioning vendor options and find the right solution for you.